Driving is the controlled operation and movement of a vehicle, an act that requires making constant decisions, many of them instantaneously.

As we enter the age of autonomous vehicles, the question is not whether an AI brain can make many critical decisions instantaneously, but rather, who will define AI’s sense of right and wrong?

Who is responsible for AI’s soul?

Programming autonomous vehicles for ethical decision-making is a new real-world challenge. Unavoidable “dilemma situations” cannot be ruled out, and automotive programmers must prepare for them. However, a universal moral code for machine ethics and self-driving vehicles does not exist.

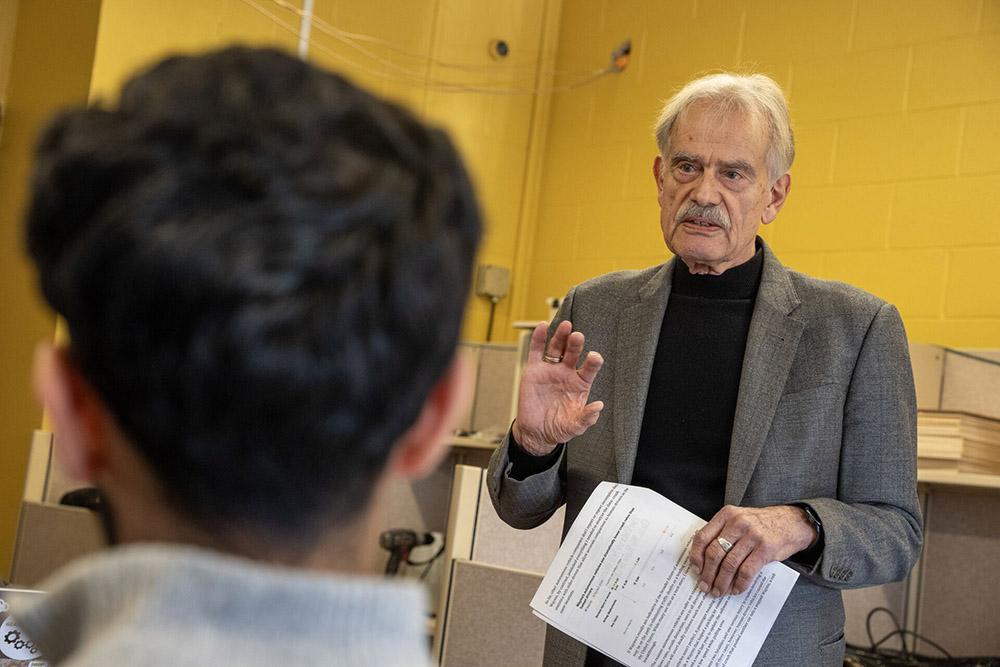

“We are now dealing with problems that become not only internal problems for engineering, but are also now becoming problems that society recognizes as critical questions they would like answers to,” said Wolf Schäfer, professor emeritus in Stony Brook’s Department of Technology and Society.

Although ethical theories such as libertarianism, utilitarianism and Kantianism are available, the algorithmic implementation of any one of them would appear arbitrary. The differing moral preferences found in Western, Eastern and other cultural clusters would also impede the design of a morally sound and globally valid vehicle control system.
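To make the point about arbitrariness concrete, here is a minimal Python sketch of how two differently programmed vehicles could reach different decisions in the same dilemma. Everything in it is an illustrative assumption rather than anything from the lab's work: the `Option` fields, the two policy functions and the sample dilemma are hypothetical, and each policy is a deliberately crude stand-in for a family of ethical reasoning, not a faithful formalization of any theory.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    """One maneuver available to the car (hypothetical model)."""
    name: str
    people_at_risk: int
    redirects_harm: bool  # True if the car actively steers toward someone


def minimize_harm(options):
    """Outcome-focused toy policy: pick the option with the fewest people at risk."""
    return min(options, key=lambda o: o.people_at_risk)


def never_redirect(options):
    """Rule-focused toy policy: rule out options that actively redirect harm,
    then minimize risk among what remains. If every option redirects harm,
    fall back to considering all of them."""
    allowed = [o for o in options if not o.redirects_harm] or list(options)
    return min(allowed, key=lambda o: o.people_at_risk)


# Assumed sample dilemma: staying in lane endangers three people,
# swerving endangers one person who was not previously in the car's path.
dilemma = [
    Option("stay_in_lane", people_at_risk=3, redirects_harm=False),
    Option("swerve_left", people_at_risk=1, redirects_harm=True),
]

print(minimize_harm(dilemma).name)   # swerve_left
print(never_redirect(dilemma).name)  # stay_in_lane
```

Both policies are internally consistent, yet they disagree on the same inputs. Choosing which one ships in a vehicle is the kind of decision that cannot be settled by engineering alone.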

Schäfer, who has been leading an Automotive Ethics VIP (Vertically Integrated Projects) team since 2020, said that addressing these problems is now critical and requires changes in engineering education, specifically appropriate AI design and teaching. Researchers predict that the shift to autonomous vehicles will take more than a decade.

“We should use this time to plan the rapidly expanding AI sphere,” he said, noting that in the U.S., there are about 40,000 fatal motor vehicle crashes per year. “That’s more than homicide, plane crashes and natural disasters combined. Some estimates say that almost 10,000 crash victims come to the emergency rooms every day. That’s what cars driven by humans do. The social burden of injuries and fatalities in car crashes is just much too high.”

Schäfer said his VIP project offers a unique opportunity to integrate subjects like moral philosophy into conventional engineering classes, an emerging need. He began building the lab in 2022, using model cars equipped with cameras and sensors on a racetrack.

“We installed little signs with bar codes that instructed the cars what they were encountering, so when the cars approached a person or multiple people, they had to read the bar code,” he said. “The students wrote the code in a way that the car was instructed to do certain things under certain conditions.”

Those conditions included moral dilemma situations.
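The setup Schäfer describes, where a sign's bar code tells the car what it is encountering and student-written rules decide what to do, can be sketched roughly as follows. This is only an illustration under stated assumptions: the sign codes, the `Encounter` type and the action names are hypothetical, and the article does not describe the lab's actual encoding or rules.

```python
from dataclasses import dataclass

# Hypothetical mapping from bar code payloads to what the sign represents.
# The real lab's codes are not given in the article.
SIGN_MEANINGS = {
    "P1": ("pedestrian", 1),   # one person ahead
    "P3": ("pedestrian", 3),   # a group of three people ahead
    "OBS": ("obstacle", 0),    # an inanimate obstacle
    "CLEAR": ("clear", 0),     # nothing ahead
}


@dataclass(frozen=True)
class Encounter:
    kind: str
    people: int


def decode_sign(code: str) -> Encounter:
    """Translate a scanned bar code into an encounter; unknown codes are flagged."""
    kind, people = SIGN_MEANINGS.get(code, ("unknown", 0))
    return Encounter(kind, people)


def choose_action(encounter: Encounter) -> str:
    """Condition-action rules: do certain things under certain conditions."""
    if encounter.people > 0:
        return "stop"
    if encounter.kind == "obstacle":
        return "steer_around"
    if encounter.kind == "clear":
        return "continue"
    return "stop"  # assumed cautious default when the sign cannot be interpreted


for code in ["P3", "OBS", "CLEAR", "???"]:
    print(code, "->", choose_action(decode_sign(code)))
```

A dilemma arises when no rule leads to a harmless action, for example when every available path carries a sign for one or more people. At that point the rules themselves have to encode a moral preference.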

“We’ll need to distinguish between moral, immoral and rightful machines,” said Schäfer. “‘Immoral’ machines would be machines that do things that we consider immoral. ‘Moral’ machines are machines that follow certain ethical rules or conventions. And ‘rightful’ machines would be created by the societal certification of new technology to be allowed on public roads.”

The challenge is that people must engage in discussions about ethical questions.

“Engineering education is not there yet,” he said. “It’s not like we have Newtonian or other physical laws, where we know that the value of gravity is not up for debate. It’s a universally recognized number. It doesn’t matter in which country or under which president. But with ethical rules, there’s variation.”

Schäfer said that engineering programs are very good at teaching technical skills, but lack the critical evaluative skills that the humanities and social sciences offer.

“We’re running into problems that cannot be solved with just technical skills,” he said. “And this is the new model for our department. AI is too important to be left to computer science alone.”

Engineering students, said Schäfer, will need to collaborate with humanities and social science students.

“The VIP program and our particular project point to a solution of engineering plus applied humanities and social sciences,” he said. “That’s not only a great opportunity for engineering, but also a great opportunity for the humanities and social sciences.”

“I originally joined the Automotive Ethics Lab because I’ve been fascinated by autonomous vehicles for years, specifically with how companies like Tesla approach self-driving,” said Ammar Ali ’26. “That interest became personal when I bought my own Tesla and started regularly using its self-driving features, which compelled me to directly contribute to the cause rather than simply observing it.”

Though the technical side of the research lab appealed to him as a computer science major, Ali said its focus on the ethical and humanistic constraints of technology drew him in, aligning with his passion for integrating AI into everyday life in a responsible way.

“I’m currently leading the development of a machine learning model that will serve as the ethical attention head within our simulation,” he said. “Besides being able to use the most advanced computing resources of Stony Brook, this research is truly multidisciplinary, and it’s something that I view as far beyond the typical undergraduate level.”

Ali added that the professional environment established within the team mimics real-world industry practices, giving its members a head start and setting expectations as students move closer to their professional careers. “In addition to expanding my technical skills, particularly in AI/ML, I also hope to build on my leadership experience. Ultimately, I aim to influence how intelligent systems are built, and the Automotive Ethics Lab is preparing me to do so.”

Schäfer describes himself as a historian of science and technology who would classify as a social scientist. That background has given him a keen sense of change and a blend of technical and societal understanding.

“To contemplate AI does not necessarily require the singular disciplinary skills that were the requirement for so long,” said Schäfer, who noted that this year, the Department of Technology and Society will become the Department of Technology, AI and Society. “Cars today are computers on wheels. But these computers on wheels have great computing capabilities, and they can do a lot more in a short time. Humans couldn’t react that quickly. But the AI in a car can recognize that it has options in a dilemma situation. And if we have options, those are all either political or moral choices.”

It’s these choices that present AI with what Schäfer describes as “no clear-cut way to go,” a situation requiring ethical deliberation to determine what would be the best way.

“2000 years of philosophy have not produced a universal moral theory,” he said. “They’ve produced a lot of very good things, but we do not have the equivalent of Newtonian laws. We have theories. Depending on how it’s programmed, not every AI-driven car would make the same decision. We are the ones who will try to get as close as we can in terms of translating philosophical theories into algorithms. Engineering has become too important to be left to the engineers.”

— Robert Emproto