The overall framework and core module design of the model

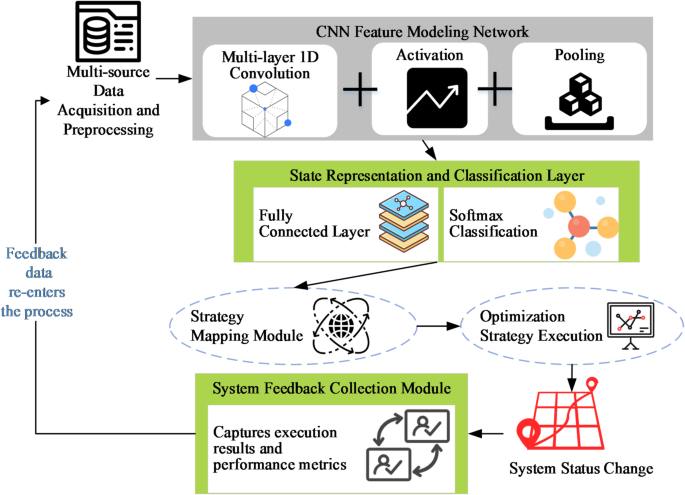

To achieve efficient optimization and intelligent decision-making for information systems in dynamic environments, this study designs a decision support model driven by NCS. The decisions in question fall into three classes: task rescheduling, resource reallocation, and early anomaly warning. They aim to improve the overall performance, resource utilization, and stability of information systems. The model forms a complete closed-loop structure following the framework of “data perception-state modeling-policy generation-system feedback”. The specific structure is shown in Fig. 1.

In the model design shown in Fig. 1, the NCS-driven framework is tailored to handle the large volumes of heterogeneous data commonly found in information systems. These include structured performance metrics, time-series operational logs, and semi-structured sensor status data. A unified data acquisition and integration module standardizes these diverse data sources into a consistent input stream. To meet the input format requirements of NCS modeling, a sliding window mechanism is applied to segment the raw time-series data. Suppose the original time series is:

$$X=\{x_{1},x_{2},\ldots,x_{T}\} \tag{1}$$

Each time point \(x_{t}\in\mathbb{R}^{d}\) is a d-dimensional feature vector. X is the original observation sequence of the system along the time dimension, with total length T. This representation organizes the discrete time-series data into a unified format, facilitating subsequent sliding window segmentation and convolutional modeling. Given a window size L, the input sample tensor is constructed as:

$$\tilde{X}_{i}=\{x_{i},x_{i+1},\ldots,x_{i+L-1}\} \tag{2}$$

Time segments of length L are formed and used as inputs for network training. Each input tensor has dimension L×d, where L is the number of time steps and d is the feature dimension. This approach preserves local temporal dependency information, enabling the convolutional layers to capture state change patterns in the time series.

Each sample \(\tilde{X}_{i}\) therefore lies in \(\mathbb{R}^{L\times d}\) and is used for subsequent network training. For feature modeling, the model adopts a multi-layer one-dimensional convolutional structure to extract local change patterns in the running state of the system. The convolution operation can effectively capture the evolution characteristics between successive time slices, and is expressed as:
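The windowing in Eq. (2) can be illustrated with a minimal sketch. The function name `sliding_windows`, the `stride` parameter, and the toy values are assumptions made for illustration; the text only specifies the window length L.

```python
from typing import List, Sequence

Vector = Sequence[float]


def sliding_windows(series: Sequence[Vector], window: int, stride: int = 1) -> List[List[Vector]]:
    """Cut a length-T series into segments of `window` consecutive time steps."""
    if window <= 0 or stride <= 0:
        raise ValueError("window and stride must be positive")
    last_start = len(series) - window  # last valid 0-based start index
    # range() is empty when the series is shorter than one window.
    return [list(series[i:i + window]) for i in range(0, last_start + 1, stride)]


# Toy series (assumed values): T = 5 time steps, d = 2 features per step.
X = [[0.1, 1.0], [0.2, 1.1], [0.3, 1.2], [0.4, 1.3], [0.5, 1.4]]
windows = sliding_windows(X, window=3)

# Number of windows, steps per window (L), features per step (d).
print(len(windows), len(windows[0]), len(windows[0][0]))  # 3 3 2
```

With stride 1, a series of length T yields T − L + 1 samples, each of shape L×d.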

$$h_{t}=\sigma\left(\sum_{i=0}^{k-1}W_{i}^{(1)}\cdot x_{t+i}+b\right) \tag{3}$$

\(h_{t}\) is the feature vector output by the convolution operation at time step t and represents the state pattern of a local time segment, where k is the width of the convolution kernel, σ(⋅) is the nonlinear activation function, and \(W^{(1)}\) and b are the parameters of the convolutional layer. The convolution operation captures local patterns, while the pooling layer performs dimensionality reduction and enhances salient features. By stacking multiple convolution and pooling layers, the model can learn multi-scale feature representations of system states. The convolutional features are then mapped to a state representation vector, which integrates the time-series information within the entire window and serves as the input for subsequent classification.
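As a worked instance of Eq. (3), the sketch below evaluates one convolution filter over a single window and then applies max pooling. It assumes stride 1 without padding, ReLU as σ, and non-overlapping pooling; the kernel values and helper names are illustrative, not taken from the study. A real layer would apply many such filters in parallel, so that \(h_{t}\) becomes a vector.

```python
from typing import List, Sequence

Vector = Sequence[float]


def relu(v: float) -> float:
    return max(0.0, v)


def conv1d_single_filter(window: Sequence[Vector], kernel: Sequence[Vector], bias: float) -> List[float]:
    """h_t = relu(sum_i W_i . x_{t+i} + b) for every position where the kernel fits."""
    k = len(kernel)
    outputs = []
    for t in range(len(window) - k + 1):
        total = bias
        for i in range(k):
            # Dot product between kernel slice W_i and feature vector x_{t+i}.
            total += sum(w * x for w, x in zip(kernel[i], window[t + i]))
        outputs.append(relu(total))
    return outputs


def max_pool1d(values: Sequence[float], size: int) -> List[float]:
    """Non-overlapping max pooling; a trailing remainder is dropped."""
    return [max(values[j:j + size]) for j in range(0, len(values) - size + 1, size)]


# Assumed toy window: L = 4 time steps, d = 2 features, kernel width k = 2.
window = [[1.0, 0.0], [2.0, 1.0], [0.0, 3.0], [4.0, 1.0]]
kernel = [[1.0, -1.0], [0.5, 0.5]]

h = conv1d_single_filter(window, kernel, bias=0.0)
print(h)                 # [2.5, 2.5, 0.0]
print(max_pool1d(h, 2))  # [2.5]
```

The third position evaluates to −0.5 before activation, so ReLU clips it to 0.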

$$z=\text{ReLU}\left(W^{(2)}\cdot h+b^{(2)}\right) \tag{4}$$

Here, h denotes the feature vector obtained from the convolution-pooling operations of the preceding layers, which contains multi-scale local information about the system state. \(W^{(2)}\) is the weight matrix of the fully connected layer, used to map the input feature vector to the state representation space. \(b^{(2)}\) is the bias vector of the fully connected layer, which adjusts the mapped output and increases model flexibility. ReLU(⋅) is the rectified linear unit activation function, which introduces non-linearity and allows the model to capture non-linear relationships between complex features. z is the state representation vector output by the fully connected layer and serves as the input to the classifier. With dimension p, it summarizes the global features of the current system state.

The state vector is then fed into the Softmax classifier to determine the state class or operation mode of the current system:

$$\widehat{y}=\text{Softmax}\left(W^{(3)}\cdot z+b^{(3)}\right) \tag{5}$$

\(\widehat{y}\in\mathbb{R}^{C}\), where C is the number of categories of system status or events. z is the output vector of the fully connected layer from Eq. (4), which contains the global representation of the system state. \(W^{(3)}\) is the weight matrix of the classification layer, mapping the state representation vector to the score space of each class. \(b^{(3)}\) is the bias vector of the classification layer, used to adjust the class scores. Softmax(⋅) is the multi-class normalization function, which converts the score of each class into a probability distribution, ensuring that the probabilities of all classes sum to 1.

In the training stage, the model is optimized with the cross-entropy loss function to minimize the deviation between the predicted label and the true state. Beyond classification, the model also maps the state result \(\widehat{y}\) into specific optimization behaviors or control instructions through the strategy mapping mechanism. This policy mapping function is defined as:
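Eqs. (4) and (5) can be traced numerically with a small sketch. All weights, dimensions, and helper names below are assumed toy values (flattened feature size 3, p = 2, C = 3), not parameters reported in the study.

```python
import math
from typing import List, Sequence


def dense(weights: Sequence[Sequence[float]], bias: Sequence[float], x: Sequence[float]) -> List[float]:
    """Affine map W . x + b, one output per weight row."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu_vec(v: Sequence[float]) -> List[float]:
    return [max(0.0, a) for a in v]


def softmax(scores: Sequence[float]) -> List[float]:
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Assumed toy parameters.
h = [2.5, 0.0, 1.0]
W2, b2 = [[0.2, -0.1, 0.4], [-0.3, 0.5, 0.1]], [0.0, 0.1]
W3, b3 = [[1.0, 0.0], [0.0, 1.0], [-1.0, 1.0]], [0.0, 0.0, 0.0]

z = relu_vec(dense(W2, b2, h))     # Eq. (4): state representation vector
y_hat = softmax(dense(W3, b3, z))  # Eq. (5): class probabilities

predicted_class = max(range(len(y_hat)), key=lambda c: y_hat[c])
print(predicted_class, round(sum(y_hat), 6))
```

Here the second component of z is clipped to zero by ReLU, and the class scores before normalization are approximately 0.9, 0.0, and −0.9, so class 0 receives the highest probability.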

$$\pi:\mathbb{R}^{C}\to\mathcal{A},\quad a=\pi\left(\widehat{y}\right) \tag{6}$$

\(\mathcal{A}\) represents the optional action space of the system, and a represents the recommended decision-making behavior at the current moment.
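One simple way to realize the mapping π in Eq. (6) is a lookup from the most probable class to an action. The sketch below is only an illustration: the class-to-action table, the `no_op` fallback, and the `min_confidence` threshold are assumptions, not details given in the text.

```python
from typing import Dict, Sequence

# Hypothetical mapping from state class index to an action in the action space A.
ACTIONS: Dict[int, str] = {
    0: "no_op",
    1: "task_rescheduling",
    2: "resource_reallocation",
    3: "anomaly_warning",
}


def policy(y_hat: Sequence[float], min_confidence: float = 0.5) -> str:
    """Map class probabilities to an action; fall back to no_op when unsure (assumed rule)."""
    best = max(range(len(y_hat)), key=lambda c: y_hat[c])
    if y_hat[best] < min_confidence:
        return "no_op"
    return ACTIONS[best]


print(policy([0.1, 0.7, 0.1, 0.1]))  # task_rescheduling
print(policy([0.3, 0.3, 0.2, 0.2]))  # no_op
```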

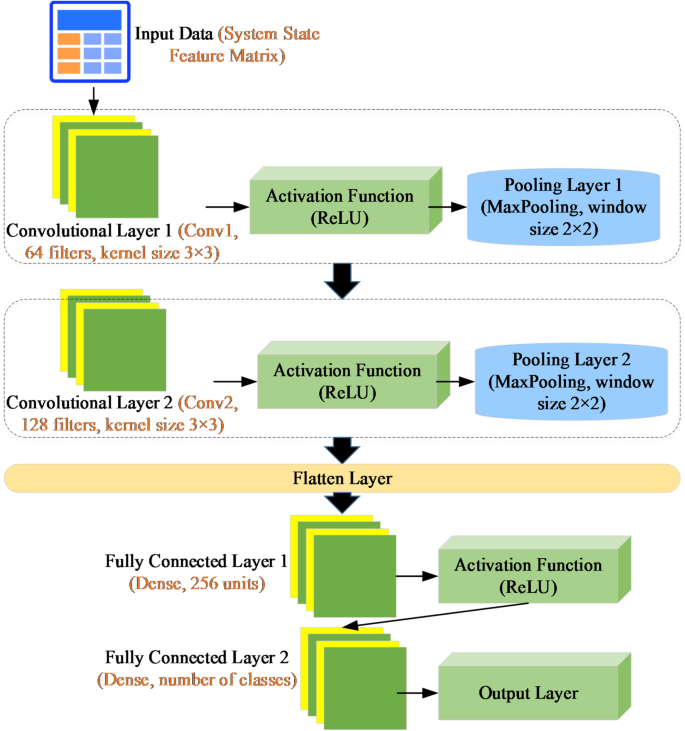

This process demonstrates the model’s complete transformation capability from state recognition to strategy output. To enable closed-loop optimization in real-world systems, the system’s new state, after executing the current strategy, is fed back into the data acquisition process. It serves as input for subsequent iterations of model training, forming a continuous learning loop.

The NCS model consists of two convolutional layers, two pooling layers, two fully connected layers, and a Softmax output layer. The structure is illustrated in Fig. 2. The input layer receives time-series data processed by sliding windows, ensuring that each sample contains the multi-dimensional features of L consecutive time steps. The two convolutional layers extract low-level and high-level local features respectively: the first convolutional kernel captures the basic patterns of the original input, while the second abstracts more complex temporal dependencies. A pooling layer follows each convolutional layer. Through max pooling or average pooling, it compresses the feature dimension, highlights salient patterns, reduces computational load, and improves robustness against local disturbances. The two fully connected layers map the features after convolution-pooling to the state representation space, integrating multi-scale local features into a global representation that provides the input for classification. The Softmax output layer outputs the probability distribution over the system’s current state or event classes, realizing state classification. The classification result is used to evaluate the system’s operating status and serves as the input for the strategy mapping module.
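To make the layer stack concrete, the following sketch traces how the sequence length and channel count change through two convolution-pooling stages. The kernel widths, filter counts, pooling size, and input shape are assumed values chosen for illustration; the calculation further assumes stride-1 convolutions without padding and non-overlapping pooling.

```python
from typing import List, Sequence, Tuple


def layer_shapes(L: int, d: int, conv_specs: Sequence[Tuple[int, int]], pool_size: int) -> List[Tuple[str, int, int]]:
    """Return (layer name, sequence length, channels) after each conv and pool stage.

    conv_specs holds one (kernel_width, n_filters) pair per convolutional layer.
    """
    length, channels = L, d
    trace = [("input", length, channels)]
    for idx, (kernel, filters) in enumerate(conv_specs, start=1):
        length = length - kernel + 1  # unpadded, stride-1 convolution
        if length <= 0:
            raise ValueError(f"kernel too wide at conv{idx}")
        channels = filters
        trace.append((f"conv{idx}", length, channels))
        length //= pool_size          # non-overlapping pooling
        if length <= 0:
            raise ValueError(f"sequence too short at pool{idx}")
        trace.append((f"pool{idx}", length, channels))
    return trace


# Assumed configuration: L = 32 steps, d = 8 features, two conv layers, pool size 2.
trace = layer_shapes(32, 8, conv_specs=[(3, 16), (3, 32)], pool_size=2)
for name, length, channels in trace:
    print(f"{name:>6}: {length} x {channels}")

flattened = trace[-1][1] * trace[-1][2]
print("flattened size fed to the first fully connected layer:", flattened)  # 192
```

Under these assumptions the sequence shrinks from 32 to 30, 15, 13, and finally 6 steps, giving 6 × 32 = 192 inputs to the first fully connected layer.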

Optimization strategy generation and feedback mechanism

After completing system state modeling, the model must transform prediction outputs into concrete optimization strategies or scheduling instructions to guide real-time adjustments and resource reallocation in the information system. To achieve this, a strategy mapping mechanism is introduced after the NCS output, establishing a dynamic link between “state recognition” and “action decision-making”. Combined with the system feedback loop, this enables a data-driven adaptive optimization cycle. This process is illustrated in Fig. 3.

In Fig. 3, during the policy generation phase, the state classification result \(\widehat{y}\in\mathbb{R}^{C}\) output by the model represents the current operation class or anomaly type of the system. This output is used as the input of the policy generation function π(⋅) and mapped to the executable action space \(\mathcal{A}\). The policy generation process can be formalized as:

$$a=\pi\left(\widehat{y},\theta\right),\quad\pi:\mathbb{R}^{C}\to\mathcal{A} \tag{7}$$

where a represents the generated optimization operation (such as task migration, resource allocation, or priority rearrangement), and θ is an adjustable policy parameter. To improve the practical adaptability of the strategy, the function π depends not only on the classification results but can also be dynamically adjusted according to the current system context (such as load pressure, operating cost, or delay index), which is expressed as follows:

$$a=\arg\min_{a\in\mathcal{A}}\mathcal{L}_{opt}(a,\mathcal{E}) \tag{8}$$

\(\mathcal{L}_{opt}\) represents the optimization objective function, such as minimum delay, maximum throughput, or minimum energy consumption. \(\mathcal{E}\) represents the description vector of the current system environment state. In the implementation of the Policy Mapping Module and Decision Execution Block, the predicted state vector is first converted into an action embedding through a fully connected network. Similarity matching is then carried out against a predefined action prototype library to generate a set of candidate actions. The candidate actions undergo rule-based filtering according to system constraints and load conditions, including resource capacity limits, priority constraints, and migration costs. For cases where multiple optional actions exist for the same state, the module introduces a reinforcement learning-based online fine-tuning strategy to rank and select actions. The reinforcement learning reward is driven by quantitative indicators of policy execution effects (such as scheduling success rate, average response time, and improvement in resource utilization), aiming to enhance the adaptability and stability of policies in dynamic environments. The output of the policy mapping module includes the recommended operation a together with the action confidence and cost evaluation, providing guidance for subsequent execution.
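The candidate generation, rule-based filtering, and cost minimization of Eq. (8) can be sketched as follows. This is a simplified illustration under stated assumptions: the prototype vectors, action names, environment fields, and the use of cosine similarity and expected delay as the cost are hypothetical, and the reinforcement learning-based ranking is replaced here by a plain argmin.

```python
import math
from typing import Dict, List, Optional, Sequence

# Hypothetical action prototype library (action name -> prototype embedding).
PROTOTYPES: Dict[str, List[float]] = {
    "migrate_task": [1.0, 0.0, 0.2],
    "raise_cpu_quota": [0.1, 1.0, 0.0],
    "raise_alarm": [0.0, 0.2, 1.0],
}


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def candidate_actions(embedding: Sequence[float], top_k: int = 2) -> List[str]:
    """Rank prototypes by similarity to the action embedding and keep the top_k."""
    ranked = sorted(PROTOTYPES, key=lambda name: cosine(embedding, PROTOTYPES[name]), reverse=True)
    return ranked[:top_k]


def feasible(action: str, env: dict) -> bool:
    """Rule-based filter on assumed capacity and migration-cost constraints."""
    if action == "raise_cpu_quota":
        return env["free_cpu"] >= env["cpu_request"]
    if action == "migrate_task":
        return env["migration_cost"] <= env["migration_budget"]
    return True


def select_action(embedding: Sequence[float], env: dict) -> Optional[str]:
    """argmin over feasible candidates of a stand-in cost L_opt(a, E)."""
    candidates = [a for a in candidate_actions(embedding) if feasible(a, env)]
    if not candidates:
        return None
    return min(candidates, key=lambda a: env["expected_delay"][a])


env = {
    "free_cpu": 2.0, "cpu_request": 1.0,
    "migration_cost": 5.0, "migration_budget": 3.0,
    "expected_delay": {"migrate_task": 40.0, "raise_cpu_quota": 55.0, "raise_alarm": 90.0},
}
print(select_action([0.9, 0.8, 0.1], env))  # raise_cpu_quota
```

In this example migration has the lower expected delay but exceeds the migration budget, so the filter removes it and the quota adjustment is selected.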

Subsequently, the decision execution block converts high-level actions into low-level system operations. In the dataset experiments of this study, task rescheduling is realized by migrating containerized tasks between available nodes based on a priority queue mechanism. Resource reallocation is achieved by dynamically adjusting CPU and memory quotas through the Kubernetes controller. Anomaly warning is performed by triggering threshold alarms and recording them in the monitoring dashboard. This module ensures that policies can actually drive the system to carry out optimization operations while maintaining real-time responsiveness.
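The priority-queue-based rescheduling step might look like the sketch below. It does not call any Kubernetes API; task names, node names, CPU figures, the convention that a lower number means higher priority, and the "most free CPU first" placement rule are all assumptions made for illustration.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Task = Tuple[int, str, float]  # (priority, task name, CPU demand); lower number = more urgent (assumed)


def reschedule(tasks: List[Task], free_cpu: Dict[str, float]) -> Dict[str, Optional[str]]:
    """Place tasks in priority order on the node with the most free CPU."""
    if not free_cpu:
        raise ValueError("at least one node is required")
    heap = list(tasks)
    heapq.heapify(heap)         # min-heap ordered by priority
    capacity = dict(free_cpu)   # work on a copy; the caller's dict is left untouched
    placement: Dict[str, Optional[str]] = {}
    while heap:
        _priority, name, demand = heapq.heappop(heap)
        node = max(capacity, key=capacity.get)
        if capacity[node] >= demand:
            capacity[node] -= demand
            placement[name] = node
        else:
            placement[name] = None  # no node can host the task; it stays pending
    return placement


tasks = [(2, "batch-report", 2.0), (1, "api-gateway", 3.0), (3, "log-compaction", 4.0)]
nodes = {"node-a": 4.0, "node-b": 3.0}
print(reschedule(tasks, nodes))
# {'api-gateway': 'node-a', 'batch-report': 'node-b', 'log-compaction': None}
```

The highest-priority task is placed first, and the lowest-priority task remains pending because neither node has 4 CPUs left.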

After execution, the new state of the system re-enters the data collection process and serves as input for the next round of model training and policy fine-tuning, thereby completing the closed-loop cycle of “state recognition – policy generation – action execution – feedback learning”. This mechanism allows the model to combine high-precision state classification with the effective conversion of classification results into executable policies. Moreover, it maintains robustness and adaptive optimization capabilities in complex environments such as dynamic loads, data loss, or sudden peaks.

In this process, the system feedback mechanism not only collects post-execution state data, but also incorporates quantitative evaluation metrics of execution effectiveness, such as strategy benefit scores, task completion efficiency, and changes in resource utilization. For instance, if a particular strategy repeatedly results in negative feedback (e.g., persistent resource bottlenecks), the model can automatically update the parameters of the mapping function π(⋅), or trigger a retraining process of the strategy layer.

To improve the long-term stability and adaptability of the system, a dynamic strategy update mechanism is also designed. When the system detects a distribution shift in the input data beyond a predefined threshold, or a significant decline in strategy effectiveness, the model enters a retraining phase, extracting features from the latest samples to reconstruct the strategy mapping network. This feedback-update interaction ensures the model’s robustness and continuous optimization capability in non-stationary environments.
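The "repeated negative feedback" trigger can be sketched as a per-action streak counter. The class name, the `patience` parameter, and the convention that a benefit score below zero counts as negative feedback are assumptions for illustration.

```python
from collections import defaultdict


class FeedbackMonitor:
    """Flags an action once it yields `patience` consecutive negative benefit scores."""

    def __init__(self, patience: int = 3):
        self.patience = patience
        self.streak = defaultdict(int)  # action name -> current run of negative scores

    def record(self, action: str, benefit_score: float) -> bool:
        if benefit_score < 0:
            self.streak[action] += 1
        else:
            self.streak[action] = 0  # any non-negative outcome resets the streak
        return self.streak[action] >= self.patience


monitor = FeedbackMonitor(patience=3)
flags = [monitor.record("migrate_task", s) for s in (-0.2, -0.1, -0.4, 0.3)]
print(flags)  # [False, False, True, False]
```

A `True` result would be the point at which the parameters of π(⋅) are updated or the strategy layer is retrained.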
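The retraining trigger can be illustrated with a simple drift score. The text does not name a specific shift metric, so the standardized mean difference used below, the thresholds, and the function names are assumptions.

```python
import statistics
from typing import Sequence

Rows = Sequence[Sequence[float]]


def mean_shift_score(reference: Rows, recent: Rows) -> float:
    """Largest per-feature |mean_recent - mean_reference| / std_reference."""
    scores = []
    for j in range(len(reference[0])):
        ref_col = [row[j] for row in reference]
        new_col = [row[j] for row in recent]
        std = statistics.pstdev(ref_col) or 1.0  # guard against constant features
        scores.append(abs(statistics.fmean(new_col) - statistics.fmean(ref_col)) / std)
    return max(scores)


def needs_retraining(reference: Rows, recent: Rows, recent_benefit: float,
                     shift_threshold: float = 2.0, benefit_floor: float = 0.0) -> bool:
    """Retrain on input drift beyond the threshold or on a drop in strategy benefit."""
    drifted = mean_shift_score(reference, recent) > shift_threshold
    degraded = recent_benefit < benefit_floor
    return drifted or degraded


reference = [[0.50, 10.0], [0.55, 11.0], [0.45, 9.0], [0.50, 10.0]]
stable = [[0.52, 10.2], [0.48, 9.8]]
drifted = [[0.90, 10.0], [0.95, 10.5]]

print(needs_retraining(reference, stable, recent_benefit=0.4))   # False
print(needs_retraining(reference, drifted, recent_benefit=0.4))  # True
print(needs_retraining(reference, stable, recent_benefit=-0.3))  # True
```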

The model integration and system deployment scheme

After completing the model design and closed-loop mechanism construction, a key measure of its practical value is whether the model can be efficiently deployed into real-world information systems and operate reliably. To this end, a deployable system integration solution is proposed, covering deployment architecture, model integration interfaces, runtime optimization, and system adaptability.

In terms of deployment architecture, the proposed model supports both modular and distributed deployment strategies. Modular deployment suits small- to medium-scale information systems, where the model can be embedded into the system’s existing scheduling or monitoring layer, enabling rapid integration and strategy control. For large-scale and high-concurrency systems, a distributed architecture is recommended: data acquisition and preprocessing, model inference, and strategy execution modules are deployed separately across edge and central nodes. This design balances real-time performance with efficient system resource utilization. For resource-constrained edge devices or embedded systems, the model can be compressed via lightweight techniques such as model pruning and parameter quantization to improve inference speed and reduce hardware dependency.

To facilitate smooth integration with existing systems, a standardized data interface and strategy invocation interface are designed. On the data input side, the model interfaces with system logs, databases, and sensor platforms through unified data formatting specifications, ensuring consistency and completeness of input. On the output side, the model’s scheduling or control decisions are translated into system-recognizable opcodes or scripts, which are then transmitted via interfaces to the operating system, resource scheduler, or intelligent control platforms to enable automated execution. Additionally, several optimization techniques are employed to improve runtime efficiency. These include limiting convolutional layer depth and parameter count, using 1D convolutions instead of conventional 2D ones, and applying ReLU activation functions alongside Batch Normalization to reduce computational overhead.
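The two compression techniques mentioned above can be illustrated on a flat list of weights. The sketch assumes magnitude-based pruning and symmetric 8-bit linear quantization, which are common choices but are not specified in the text; function names and weight values are illustrative.

```python
from typing import List, Sequence, Tuple


def prune_by_magnitude(weights: Sequence[float], threshold: float) -> List[float]:
    """Zero out weights whose absolute value is below the threshold."""
    return [0.0 if abs(w) < threshold else w for w in weights]


def quantize_int8(weights: Sequence[float]) -> Tuple[List[int], float]:
    """Symmetric linear quantization to integers in [-127, 127]; returns (values, scale)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(q: Sequence[int], scale: float) -> List[float]:
    return [v * scale for v in q]


weights = [0.50, -0.25, 0.01, 1.27, -1.27]

pruned = prune_by_magnitude(weights, threshold=0.05)
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print(pruned)  # [0.5, -0.25, 0.0, 1.27, -1.27]
print(q)       # [50, -25, 1, 127, -127]
print(max(abs(a - b) for a, b in zip(weights, restored)) <= scale / 2 + 1e-12)  # True
```

Each quantized weight needs one byte instead of a 32-bit float, at the cost of a rounding error of at most half the scale per weight.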