The old promise of Moore’s Law is simple: Every two years, the future will arrive in the form of faster microchips. For decades, the semiconductor industry delivered that progress. But that era is fading. Today, performance gains are harder to achieve, energy demands are surging, and the idea of a single chip doing everything well is starting to break down.

That is the world Aman Arora is working in and pushing forward.

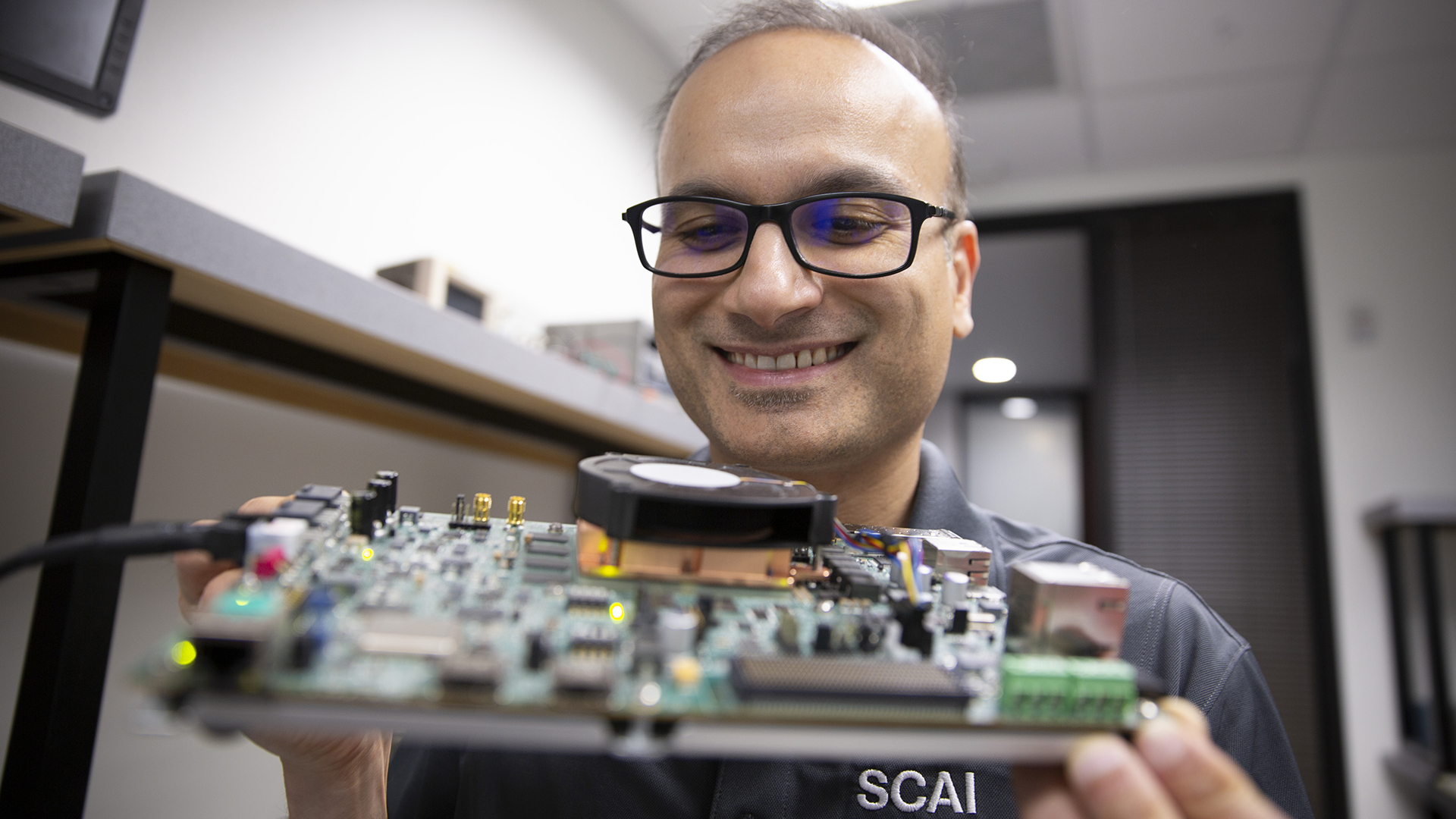

Arora is an assistant professor of computer science and engineering in the School of Computing and Augmented Intelligence, part of the Ira A. Fulton Schools of Engineering at Arizona State University. His research in reconfigurable computing sees the next wave of artificial intelligence, or AI, acceleration not in expensive, hotter general-purpose processors, but in hardware that can be targeted to the job at hand.

Arora’s tool of choice is the field-programmable gate array, or FPGA, a chip that can be reshaped after it leaves the factory.

“Think of an FPGA as a giant breadboard, an electronics platform where you can wire components together, shrunk down into a tiny chip,” Arora says. “You can connect different components however you want, and it becomes whatever kind of circuit you need.”

The accidental engine of AI

The chips that power AI today weren’t built for AI at all. They were created for video games.

Graphics processing units, or GPUs, were designed to render online worlds, delivering convincing displays of lighting, shadows and textures. With some adaptation, GPUs quickly became the engine behind modern AI, powering everything from image recognition to large language models.

But they were never designed for what came next.

GPUs are built to handle massive batches of work at once. They excel at training AI models in sprawling data centers, where performance is measured in how much can be processed over time.

But outside the data center, the problem changes. Increasingly, AI is expected to respond instantly, whether to answer a question, detect a medical anomaly, guide a vehicle or interpret a signal in real time. That stage, known as inference, often prioritizes speed over scale: one request, one answer, as fast as possible.

That is where the cracks start to show.

The shape-shifting chip

Even the most advanced GPUs still rely on the same basic rhythm as traditional processors: fetch instructions, decode them and execute them. They also must constantly move data back and forth through memory.

That overhead adds up. And in a world where AI is moving into phones, sensors and edge devices, it is often too much.

“With an FPGA, there is no instruction decode, no instruction fetch happening,” Arora says. “So no overhead.”

Instead of following instructions step by step, the chip is configured to perform the task directly. Most processors leave the factory with their internal wiring fixed in place, and designing a new chip for every task would be far too slow and expensive.

An FPGA is different. Its internal connections can be reprogrammed after it’s fabricated. Load a new configuration, and the chip reshapes itself into a circuit built for that specific task.
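
To make the idea concrete, here is a minimal Python sketch. It is not Arora's design or any vendor's tooling. It assumes a toy two-input lookup table, the kind of small configurable logic element FPGAs are commonly built from. The `LookupTable` class and the configuration names are hypothetical, chosen only for illustration.

```python
class LookupTable:
    """Toy 2-input lookup table (hypothetical, for illustration only)."""

    def __init__(self, truth_table):
        self.configure(truth_table)

    def configure(self, truth_table):
        # "Loading a configuration": store one output bit per input combination.
        if len(truth_table) != 4:
            raise ValueError("a 2-input table needs exactly 4 entries")
        self.truth_table = list(truth_table)

    def evaluate(self, a, b):
        # No instruction fetch or decode: the inputs directly select a stored bit.
        return self.truth_table[(a << 1) | b]


# Assumed example configurations, indexed by inputs (a, b) = 00, 01, 10, 11.
AND_CONFIG = [0, 0, 0, 1]
XOR_CONFIG = [0, 1, 1, 0]

inputs = [(a, b) for a in (0, 1) for b in (0, 1)]

lut = LookupTable(AND_CONFIG)
and_outputs = [lut.evaluate(a, b) for a, b in inputs]

# Same "hardware", new configuration: the block now behaves as a different circuit.
lut.configure(XOR_CONFIG)
xor_outputs = [lut.evaluate(a, b) for a, b in inputs]

print(and_outputs, xor_outputs)
```

A real FPGA contains many such blocks plus programmable routing between them, so this sketch shows only the reconfiguration principle, not the scale.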

Arora is using that flexibility in two ways.

In one direction, his lab is using FPGAs to make AI systems more efficient in the real world. In medical applications, his team is developing systems for glucose monitoring that can run continuously with minimal energy use. In quantum computing, they’re building hardware to interpret extremely delicate signals, using machine learning to determine whether a quantum system is reading as a zero or a one. Without that step, the results can’t be trusted.

In the other direction, Arora is using AI to improve the chips themselves. Designing an FPGA means choosing from millions of possible ways to configure the chip. His research group is using machine learning to narrow those choices, helping engineers find better designs faster.

Built to adapt

The result is a shift toward hardware that is not just specialized, but adaptable.

Already in use by companies like Microsoft, FPGAs can be reconfigured as needs change, without being replaced entirely. That makes them especially valuable in places where hardware can’t be easily updated and systems need to adapt to changing demands. The chips are widely used in defense systems and space missions, where high performance is critical and replacing hardware isn’t a simple option.

It also changes the economics and environmental cost of computing. Instead of discarding hardware every few years, the same chip can be repeatedly repurposed, reducing both energy use and the need for new manufacturing. And as AI infrastructure scales, cutting wasted computation is becoming just as important as improving performance.

“Some technology companies are buying nuclear power plants to sustain the growth in AI,” he says. “FPGAs are a much more energy-efficient alternative.”

For Arora, the goal is to rethink how technology works together. The future of AI, he argues, will come from designing hardware and software side by side, with systems built for specific tasks but flexible enough to evolve. It is a shift away from the idea of a single, ever-faster machine and toward a tool kit of systems.

In that world, the most powerful computer may not be the one that can do everything. It may be the one that can adapt in ways that matter.

Why this research matters

Research is the invisible hand that powers America’s progress. It unlocks discoveries and creates opportunity. It develops new technologies and new ways of doing things.

Learn more about ASU discoveries that are contributing to changing the world and making America the world’s leading economic power at researchmatters.asu.edu.